Note: ‘Prompt Chaining’ is a separate prompt engineering technique and should not be confused with Chain of Thought prompting. See the ‘Prompt Chaining’ article for details.

This article explains what Chain of Thought (CoT) prompting is, how it works, its main types, and its advantages and limitations. Practical examples from diverse domains illustrate its effectiveness for both beginners and seasoned AI enthusiasts.

What is Chain of Thought (CoT) Prompting?

Chain of Thought (CoT) prompting is a prompt engineering technique designed to improve the reasoning abilities of large language models (LLMs), especially for tasks that involve multiple steps or complex logic. Instead of expecting a direct answer, CoT prompting encourages the model to express its thought process, much like a human solving a problem by showing their work. This step-by-step breakdown often leads to more accurate and coherent responses.

Imagine asking an LLM a complex math problem. Without CoT, it might give a wrong answer directly. With CoT, you instruct it to show its work, detailing each calculation step. This process makes the model’s reasoning transparent and often corrects errors that would otherwise occur.

How Does Chain of Thought Prompting Work?

CoT prompting works by transforming a single, complex problem into a sequence of simpler, interconnected sub-problems. This process mirrors human cognitive strategies for tackling difficult tasks. When an LLM is given a prompt that encourages CoT, it generates intermediate reasoning steps before arriving at a final answer. These steps act as a ‘chain’ of thoughts, guiding the model through a logical progression.

Consider a scenario where an LLM is asked to solve a word problem. Without CoT, the model might attempt to directly compute the answer, potentially overlooking crucial details or making logical leaps. With CoT, the prompt explicitly or implicitly asks the model to:

- Deconstruct the problem: Identify key information and break down the main question into smaller, manageable parts.

- Formulate intermediate steps: Determine the logical sequence of operations or deductions required to solve each sub-problem.

- Execute steps sequentially: Apply knowledge and perform calculations or reasoning for each step.

- Synthesize the final answer: Combine the results from the intermediate steps to produce the ultimate solution.

This structured approach significantly improves the model’s ability to handle complex reasoning tasks, leading to more accurate and reliable outcomes. The effectiveness of CoT prompting is particularly noticeable in larger language models, where it is considered an emergent ability, a capability that becomes apparent as the model’s size and complexity increase.
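The four stages above can be written directly into a prompt. The following is a minimal sketch, not a canonical template: the stage wording and the function name `build_structured_cot_prompt` are assumptions made for illustration.

```python
# Hypothetical stage instructions that mirror the four steps listed above.
COT_STAGES = [
    "Deconstruct the problem into its key facts and sub-questions.",
    "Formulate the intermediate steps needed to answer each sub-question.",
    "Execute the steps one at a time, showing each calculation or deduction.",
    "Synthesize the results into a final answer.",
]


def build_structured_cot_prompt(question: str) -> str:
    """Return a prompt asking the model to follow the four stages in order."""
    numbered = "\n".join(
        f"{i}. {stage}" for i, stage in enumerate(COT_STAGES, start=1)
    )
    return f"{question}\n\nWork through the problem in these stages:\n{numbered}"


print(build_structured_cot_prompt(
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
))
```

Spelling out the stages is the explicit variant. A short cue such as “Let’s think step by step” asks for the same behaviour implicitly.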

Standard Prompting vs. Chain of Thought Prompting

To better understand the impact of CoT prompting, let’s compare it with standard prompting:

Standard Prompting:

In standard prompting, the user provides a direct question or instruction, and the LLM responds with a direct answer. There are no explicit instructions for the model to show its reasoning. This can lead to quick but often inaccurate answers for complex problems.

Example:

Prompt: “Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?”

LLM Output (without CoT): “The answer is 11.”

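Here the answer happens to be correct, but the model shows no reasoning, so there is no way to verify how it got there. On harder multi-step problems, answering directly like this makes the model more likely to jump to an incorrect answer.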

Chain of Thought Prompting:

With CoT prompting, the user either provides examples of step-by-step reasoning or explicitly instructs the model to explain its reasoning step-by-step. This encourages the model to think through the problem logically.

Example:

Prompt: “Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now? Let’s think step by step.”

LLM Output (with CoT):

- Roger started with 5 tennis balls.

- He buys 2 more cans, and each can has 3 tennis balls, so that’s 2 * 3 = 6 new tennis balls.

- In total, Roger has 5 + 6 = 11 tennis balls.

- The answer is 11.

This detailed breakdown allows the model to arrive at the correct answer by following a clear logical path, making the process transparent and verifiable.
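In code, the two prompting styles differ only in the text sent to the model. The sketch below builds both prompts for the tennis ball question and mirrors the chain's arithmetic so the expected answer can be checked. The helper names are illustrative assumptions, and no model is called.

```python
QUESTION = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)

# Cue wording taken from the example above.
COT_CUE = "Let's think step by step."


def standard_prompt(question: str) -> str:
    """Standard prompting: send the question as-is."""
    return question


def cot_prompt(question: str, cue: str = COT_CUE) -> str:
    """CoT prompting: the same question plus an explicit reasoning cue."""
    return f"{question} {cue}"


def expected_answer(initial: int, cans: int, balls_per_can: int) -> int:
    """Mirror the chain: count the new balls, then add them to the initial ones."""
    new_balls = cans * balls_per_can
    return initial + new_balls


print(cot_prompt(QUESTION))
print(expected_answer(initial=5, cans=2, balls_per_can=3))
```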

Types of Chain of Thought Prompting

CoT prompting can be implemented in several ways, each suited for different scenarios and model capabilities. The main types include Zero-shot CoT, Few-shot CoT, and Automatic CoT (Auto-CoT).

Zero-shot Chain of Thought (Zero-shot CoT)

How it works:

Zero-shot CoT adds a short reasoning cue, such as “Let’s think step by step”, to the prompt without providing any worked examples. On receiving this instruction, the model generates intermediate reasoning steps before giving the final answer, which helps it process the problem more thoroughly.

Example (Arithmetic):

Prompt: “I went to the market and bought 10 apples. I gave 2 apples to the neighbor and 2 to the repairman. I then went and bought 5 more apples and ate 1. How many apples did I remain with? Let’s think step by step.”

LLM Output:

- First, you started with 10 apples.

- You gave away 2 apples to the neighbor and 2 to the repairman, so you had 10 - 2 - 2 = 6 apples left.

- Then you bought 5 more apples, so now you had 6 + 5 = 11 apples.

- Finally, you ate 1 apple, so you would remain with 11 - 1 = 10 apples.

Without the “Let’s think step by step” instruction, the model might have given an incorrect direct answer like “11 apples”. This demonstrates the power of zero-shot CoT in guiding the model towards a correct, reasoned solution.
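Because a zero-shot CoT response mixes reasoning with the result, applications usually need to pull the final answer out of the text. The sketch below assumes a simple heuristic: treat the last number in the output as the answer. The function name is hypothetical, and the heuristic fails if the model adds numbers after its conclusion.

```python
import re
from typing import Optional

# Matches integers and decimals, with an optional leading minus sign.
NUMBER = re.compile(r"-?\d+(?:\.\d+)?")


def extract_final_number(cot_output: str) -> Optional[float]:
    """Return the last number in a reasoning chain, or None if there is none."""
    matches = NUMBER.findall(cot_output)
    return float(matches[-1]) if matches else None


output = (
    "First, you started with 10 apples. "
    "You gave away 2 apples to the neighbor and 2 to the repairman, "
    "so you had 10 - 2 - 2 = 6 apples left. "
    "Then you bought 5 more apples, so now you had 6 + 5 = 11 apples. "
    "Finally, you ate 1 apple, so you would remain with 11 - 1 = 10 apples."
)
print(extract_final_number(output))
```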

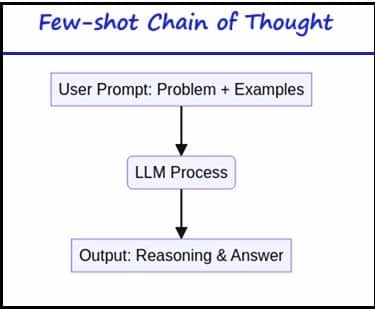

Few-shot Chain of Thought (Few-shot CoT)

How it works:

The prompt includes a few examples where both the problem and its detailed, step-by-step solution (the chain of thought) are provided. The LLM then uses these examples as a guide to generate a similar chain of thought for the new problem it needs to solve.

Example (Logical Reasoning):

Prompt:

“The odd numbers in this group add up to an even number: 4, 8, 9, 15, 12, 2, 1.
A: Adding all the odd numbers (9, 15, 1) gives 25. The answer is False.

The odd numbers in this group add up to an even number: 17, 10, 19, 4, 8, 12, 24.
A: Adding all the odd numbers (17, 19) gives 36. The answer is True.

The odd numbers in this group add up to an even number: 16, 11, 14, 4, 8, 13, 24.
A: Adding all the odd numbers (11, 13) gives 24. The answer is True.

The odd numbers in this group add up to an even number: 17, 9, 10, 12, 13, 4, 2.
A: Adding all the odd numbers (17, 9, 13) gives 39. The answer is False.

The odd numbers in this group add up to an even number: 15, 32, 5, 13, 82, 7, 1.
A:”

LLM Output: “Adding all the odd numbers (15, 5, 13, 7, 1) gives 41. The answer is False.”

In this example, the LLM learns the pattern of identifying odd numbers, summing them, and then determining if the sum is even or odd, based on the provided examples [1].
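A few-shot CoT prompt like this one can be assembled programmatically. The sketch below is one possible approach with assumed helper names: it writes the reasoning for each demonstration group in the same style as the example and leaves the final “A:” open for the model.

```python
from typing import List

TEMPLATE = "The odd numbers in this group add up to an even number: {nums}.\nA:"


def odd_sum_reasoning(numbers: List[int]) -> str:
    """Write the chain of thought for one group in the style of the example."""
    odds = [n for n in numbers if n % 2 == 1]
    total = sum(odds)
    verdict = "True" if total % 2 == 0 else "False"
    listed = ", ".join(str(n) for n in odds)
    return f"Adding all the odd numbers ({listed}) gives {total}. The answer is {verdict}."


def build_few_shot_prompt(demo_groups: List[List[int]], query_group: List[int]) -> str:
    """Join solved demonstrations, then append the unsolved query."""
    blocks = []
    for group in demo_groups:
        question = TEMPLATE.format(nums=", ".join(map(str, group)))
        blocks.append(f"{question} {odd_sum_reasoning(group)}")
    blocks.append(TEMPLATE.format(nums=", ".join(map(str, query_group))))
    return "\n\n".join(blocks)


demos = [
    [4, 8, 9, 15, 12, 2, 1],
    [17, 10, 19, 4, 8, 12, 24],
]
print(build_few_shot_prompt(demos, [15, 32, 5, 13, 82, 7, 1]))
```

Generating the demonstration answers from code also guards against arithmetic slips in hand-written examples, which the model would otherwise imitate.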

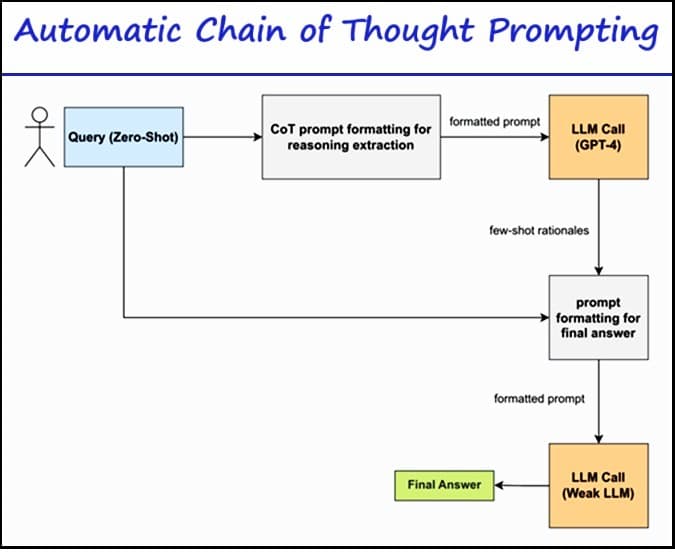

Automatic Chain of Thought (Auto-CoT)

Manually crafting effective and diverse examples for Few-shot CoT can be time-consuming and challenging. Auto-CoT addresses this by automating the process of generating reasoning chains for demonstrations. It leverages LLMs themselves to create these examples.

How it works:

Auto-CoT typically involves two main stages:

- Question Clustering: The dataset of questions is partitioned into several clusters based on similarity.

- Demonstration Sampling: A representative question from each cluster is selected, and its reasoning chain is generated using Zero-shot CoT (e.g., with “Let’s think step by step”). Simple heuristics, such as limits on question length or the number of reasoning steps, filter out weak candidates. The generated examples then serve as demonstrations for the LLM.

This automated approach helps to create diverse and effective demonstrations, reducing the manual effort involved in CoT prompting.
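The two stages can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the reference Auto-CoT implementation: a greedy word-overlap clustering stands in for a proper clustering step (a real pipeline would more likely cluster sentence embeddings), the first acceptable question in each cluster is treated as its representative, `generate` is a hypothetical callable wrapping whatever model is used, and the thresholds are placeholder values.

```python
import re
from typing import Callable, List, Set


def tokens(text: str) -> Set[str]:
    """Lowercased word set used as a crude similarity signal."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: Set[str], b: Set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_questions(questions: List[str], threshold: float = 0.3) -> List[List[str]]:
    """Stage 1: join the first cluster whose seed question is similar enough."""
    clusters: List[List[str]] = []
    for question in questions:
        for cluster in clusters:
            if jaccard(tokens(question), tokens(cluster[0])) >= threshold:
                cluster.append(question)
                break
        else:
            clusters.append([question])
    return clusters


def build_demonstrations(
    questions: List[str],
    generate: Callable[[str], str],  # hypothetical model wrapper
    max_question_words: int = 60,    # placeholder heuristic threshold
    max_steps: int = 5,              # placeholder heuristic threshold
) -> List[str]:
    """Stage 2: one Zero-shot CoT demonstration per cluster, filtered by heuristics."""
    demos = []
    for cluster in cluster_questions(questions):
        for question in cluster:
            if len(question.split()) > max_question_words:
                continue
            chain = generate(f"{question} Let's think step by step.")
            steps = [line for line in chain.splitlines() if line.strip()]
            if len(steps) <= max_steps:
                demos.append(f"Q: {question}\nA: {chain}")
                break  # one demonstration per cluster
    return demos
```

The returned demonstrations can be joined and placed ahead of a new question, in the same way as a hand-written Few-shot CoT prompt.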

Advantages of Chain of Thought Prompting

CoT prompting offers several significant benefits that enhance the performance and utility of LLMs:

- Improved Accuracy: By breaking down complex problems into manageable steps, CoT prompting significantly boosts the accuracy of LLMs, especially in tasks requiring multi-step reasoning, such as arithmetic, commonsense, and symbolic reasoning.

- Enhanced Transparency and Interpretability: The generated intermediate steps provide a clear view into the model’s reasoning process. This transparency makes it easier for users to understand how the LLM arrived at its conclusion, which is crucial for debugging, auditing, and building trust in AI systems.

- Better Handling of Complex Information: LLMs can process and synthesize complex information more effectively when guided through a logical sequence of thoughts, preventing them from making premature or incorrect assumptions.

- Reduced Hallucinations and Logical Errors: By forcing the model to articulate its reasoning, CoT prompting can help mitigate the generation of factually incorrect or logically inconsistent outputs, often referred to as hallucinations.

- Versatility: CoT prompting is highly flexible and can be applied across a broad range of tasks and domains, from mathematical problem-solving to complex decision-making in AI assistants.

- Educational Value: The step-by-step explanations generated by CoT can be valuable in educational contexts, helping users understand complex concepts through detailed breakdowns.

Limitations of Chain of Thought Prompting

Despite its numerous advantages, CoT prompting also comes with certain limitations that need to be considered:

- Increased Computational Cost: Generating and processing multiple reasoning steps requires more computational power and time compared to standard single-step prompting. This can make CoT prompting more expensive to implement, especially for large-scale applications.

- Reliance on Prompt Quality: The effectiveness of CoT prompting is heavily dependent on the quality and clarity of the prompts provided. Poorly crafted prompts can lead to suboptimal or incorrect reasoning chains.

- Risk of Plausible but Incorrect Reasoning: While CoT aims to improve accuracy, there is still a risk that the model might generate reasoning paths that appear logical but are factually incorrect, leading to misleading conclusions.

- Labor-Intensive for Few-shot CoT: Manually designing effective examples for Few-shot CoT can be complex and time-consuming, requiring a deep understanding of both the problem domain and the LLM’s capabilities.

- Potential for Overfitting: Models might sometimes overfit to the specific style or pattern of reasoning presented in the prompts, which could reduce their generalization capabilities on diverse tasks.

- Challenges in Evaluation: Reasoning quality is hard to measure quantitatively. A chain can reach the right answer through flawed steps, and judging whether each step is sound is often subjective.
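One partial safeguard against plausible but incorrect reasoning is to re-check any arithmetic the chain states. The sketch below is an assumption-laden illustration: it only finds steps written as `expression = number` with the four basic operators, and it says nothing about whether the steps are the right ones to take.

```python
import ast
import operator
import re
from typing import List, Tuple

_NUM = r"-?\d+(?:\.\d+)?"
# An expression is two or more numbers joined by + - * /, followed by "= value".
STEP_PATTERN = re.compile(rf"({_NUM}(?:\s*[+\-*/]\s*{_NUM})+)\s*=\s*({_NUM})")

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def _evaluate(node: ast.AST) -> float:
    """Evaluate a parsed arithmetic expression without using eval()."""
    if isinstance(node, ast.Expression):
        return _evaluate(node.body)
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return float(node.value)
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_evaluate(node.left), _evaluate(node.right))
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_evaluate(node.operand)
    raise ValueError("unsupported expression")


def check_arithmetic_steps(chain: str, tolerance: float = 1e-9) -> List[Tuple[str, bool]]:
    """Return (expression, is_correct) for each arithmetic step found in the chain."""
    results = []
    for expression, claimed in STEP_PATTERN.findall(chain):
        try:
            actual = _evaluate(ast.parse(expression, mode="eval"))
            ok = abs(actual - float(claimed)) <= tolerance
        except (SyntaxError, ValueError, ZeroDivisionError):
            ok = False  # treat anything that cannot be evaluated as unverified
        results.append((expression, ok))
    return results


print(check_arithmetic_steps("2 * 3 = 6 new balls, so 5 + 6 = 12 in total."))
```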

Examples from Various Domains

Chain of Thought prompting’s versatility allows it to be applied across a wide array of fields, significantly enhancing LLMs’ problem-solving capabilities. Here are examples from different domains:

Mathematical Reasoning

CoT prompting excels in arithmetic and mathematical problems by guiding the LLM through each calculation step, preventing errors that often occur when models attempt to solve such problems in a single go.

Example:

Prompt: “The total bill at a restaurant was $75. If a 15% tip was added, what was the final amount? Let’s calculate step by step.”

LLM Output:

- First, calculate the tip amount: 15% of $75.

- 0.15 * 75 = 11.25.

- The tip amount is $11.25.

- Next, add the tip to the original bill: $75 + $11.25.

- 75 + 11.25 = 86.25.

- The final amount was $86.25.
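The same two steps can be reproduced in code to confirm the figures. This sketch uses Python's `decimal` module to avoid floating-point rounding in currency amounts; the function name `final_bill` is an illustrative assumption.

```python
from decimal import Decimal
from typing import Tuple


def final_bill(bill: Decimal, tip_rate: Decimal) -> Tuple[Decimal, Decimal]:
    """Step 1: compute the tip. Step 2: add it to the original bill."""
    tip = (bill * tip_rate).quantize(Decimal("0.01"))  # round to cents
    return tip, bill + tip


tip, total = final_bill(Decimal("75"), Decimal("0.15"))
print(f"Tip: ${tip}, final amount: ${total}")
```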

Logical Reasoning

For problems requiring deductive reasoning, CoT helps LLMs establish clear logical connections between statements to arrive at a sound conclusion.

Example:

Prompt: “If all birds can fly, and a penguin is a bird, can a penguin fly? Let’s reason step by step.”

LLM Output:

- Premise 1: All birds can fly.

- Premise 2: A penguin is a bird.

- However, this is a trick question. While the premise states all birds can fly, in reality, penguins are birds that cannot fly.

- Therefore, based on real-world knowledge, a penguin cannot fly.

(Note: This example highlights how LLMs can sometimes override explicit premises with real-world knowledge, which can be both an advantage and a challenge depending on the task. A more constrained logical reasoning problem would stick strictly to the premises.)
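To make the contrast concrete, the sketch below applies only the stated premises and ignores real-world knowledge. Under that strict reading the conclusion is that a penguin can fly, which is the answer a purely premise-bound task would expect. The data structures and function name are assumptions chosen for illustration.

```python
from typing import Dict, Set


def derive_properties(
    entity: str,
    is_a: Dict[str, Set[str]],     # premise form: "X is a Y"
    all_can: Dict[str, Set[str]],  # premise form: "all Y can Z"
) -> Set[str]:
    """Collect every ability the premises grant to `entity`, and nothing else."""
    abilities: Set[str] = set()
    for category in is_a.get(entity, set()):
        abilities |= all_can.get(category, set())
    return abilities


premises_is_a = {"penguin": {"bird"}}
premises_all_can = {"bird": {"fly"}}

abilities = derive_properties("penguin", premises_is_a, premises_all_can)
print("fly" in abilities)
```

If the task requires this behaviour, the prompt should say so explicitly, for example by instructing the model to reason only from the given premises.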

Commonsense Reasoning

CoT helps LLMs navigate everyday scenarios and make inferences based on common knowledge, which is crucial for tasks like dialogue systems and content generation.

Example:

Prompt: “Sarah has a glass of water. She pours it into an empty bowl. What is the shape of the water now? Let’s think step by step.”

LLM Output:

- Water is a liquid.

- Liquids take the shape of their container.

- Sarah poured the water from a glass into an empty bowl.

- Therefore, the water will now take the shape of the bowl.

Language Understanding and Analysis

CoT can be used to analyze text, summarize complex documents, or explain nuances in language by breaking down the interpretation process.

Example (Sentiment Analysis with Explanation):

Prompt: “Analyze the sentiment of the following review and explain your reasoning: ‘The movie started slow, but the plot twists in the second half were absolutely brilliant! I was on the edge of my seat.’ Let’s break down the sentiment.”

LLM Output:

- The first part ‘The movie started slow’ indicates a slightly negative or neutral initial sentiment.

- However, the phrase ‘but the plot twists in the second half were absolutely brilliant!’ expresses strong positive sentiment.

- ‘I was on the edge of my seat’ further reinforces a highly positive and engaging experience.

- Combining these points, the overall sentiment is overwhelmingly positive, as the positive aspects significantly outweigh the initial minor negative point.

Code Generation and Debugging

In programming, Chain of Thought prompting can guide LLMs to generate code by first outlining the logic and then translating it into code, or to debug by tracing execution flow.

Example (Code Generation):

Prompt: “Write a Python function that calculates the factorial of a given number. Think step by step how to approach this.”

LLM Output:

Step 1: Understand Factorial: The factorial of a non-negative integer n is the product of all positive integers less than or equal to n. It’s denoted by n!. For example, 5! = 5 * 4 * 3 * 2 * 1 = 120. The factorial of 0 is 1.

Step 2: Define Function Signature: The function will take one argument, n, which is the number.

Step 3: Handle Base Cases: If n is 0, return 1. If n is negative, it’s an invalid input, so we might raise an error or return a specific value.

Step 4: Implement Iterative Calculation: For n > 0, initialize a result variable to 1. Loop from 1 to n (inclusive), multiplying result by the current loop number in each iteration.

Step 5: Return Result: After the loop, return the result.

    def factorial(n):
        # Reject anything that is not a non-negative integer.
        if not isinstance(n, int) or n < 0:
            raise ValueError("Input must be a non-negative integer")
        # Base case: 0! is defined as 1.
        if n == 0:
            return 1
        else:
            result = 1
            # Multiply result by every integer from 1 to n inclusive.
            for i in range(1, n + 1):
                result *= i
            return result

FAQs

1. What is Chain of Thought Prompting in simple terms?

Answer: Chain of Thought Prompting (CoT) is a technique that guides AI models like GPT-4 or Gemini to explain their reasoning step-by-step before producing a final answer. It improves accuracy, transparency, and helps the AI mimic human-like logical thinking.

2. How does Chain of Thought Prompting improve AI reasoning?

Answer: CoT prompting encourages large language models to generate intermediate reasoning steps, reducing logical errors and increasing clarity. It’s particularly useful for math problems, coding, and decision-making tasks.

3. What are different types of Chain of Thought Prompting?

Answer: The main types are Zero-Shot CoT, Few-Shot CoT, and Automatic CoT (Auto-CoT).

- Zero-Shot uses reasoning cues like “Let’s think step by step.”

- Few-Shot includes example reasoning paths.

- Auto-CoT automates reasoning path generation.

4. What is the difference between Chain of Thought Prompting and Prompt Chaining?

Answer: Chain of Thought Prompting focuses on reasoning within a single prompt, while Prompt Chaining connects multiple prompts sequentially, where the output of one becomes the input for the next. Both aim for better reasoning, but at different levels.
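The difference shows up clearly in code. In the sketch below, `model` is a hypothetical callable that takes a prompt string and returns the model's reply, and the prompt wording is an assumption. Chain of Thought makes one call and asks for reasoning inside it, while Prompt Chaining makes several calls and passes each output forward.

```python
from typing import Callable

Model = Callable[[str], str]  # hypothetical: prompt in, reply out


def chain_of_thought(model: Model, question: str) -> str:
    """One prompt, one call: the reasoning happens inside a single response."""
    return model(f"{question} Let's think step by step.")


def prompt_chain(model: Model, question: str) -> str:
    """Two prompts, two calls: the first output becomes input to the second."""
    facts = model(f"List the key facts in this problem: {question}")
    return model(
        "Using these facts, answer the question.\n"
        f"Facts: {facts}\n"
        f"Question: {question}"
    )
```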

5. Can I use Chain of Thought Prompting in ChatGPT, Gemini, or Claude?

Answer: Yes. CoT prompting works across AI models like GPT-3.5, GPT-4, Gemini, and Claude 3. More advanced models tend to generate clearer reasoning chains, especially when guided with structured CoT prompts.

Conclusion

Chain of Thought prompting represents a significant leap forward in enhancing the reasoning capabilities of Large Language Models. By encouraging LLMs to articulate their thought processes step-by-step, Chain of Thought prompting not only improves the accuracy and reliability of their outputs but also provides invaluable transparency into their decision-making. From simple arithmetic to complex logical deductions and even code generation, CoT empowers AI to tackle a broader spectrum of challenging tasks with greater proficiency.

While challenges such as increased computational cost and the need for high-quality prompts exist, the continuous development of techniques like Zero-shot CoT, Few-shot CoT, and Auto-CoT is paving the way for more intelligent, interpretable, and versatile AI systems. As LLMs continue to evolve, Chain of Thought prompting will undoubtedly remain a cornerstone technique for unlocking their full potential and fostering a deeper understanding of how these powerful models ‘think’.

References

[1] Chain-of-Thought (CoT) Prompting. https://www.promptingguide.ai/techniques/cot

[2] What is chain of thought (CoT) prompting? https://www.ibm.com/think/topics/chain-of-thoughts

To test your understanding of Chain of Thought prompting, try the Chain-of-Thought Prompting Quiz.
