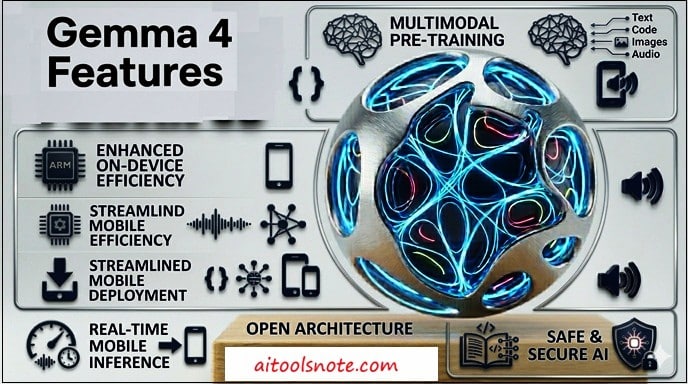

Gemma 4 Features You Must Know in 2026

In this article, we will walk through every key Gemma 4 feature you must know, along with practical use cases and how to get started today.

What Is Gemma 4?

Gemma 4 is a family of open-weight generative AI models developed by Google DeepMind. Unlike proprietary models locked behind APIs, Gemma 4 models come with open weights, allowing developers to download, fine-tune, and deploy them in their own applications without restrictions.

The model family is released in four distinct sizes:

- Gemma 4 E2B: Effective 2B parameters, designed for ultra-mobile and browser deployment
- Gemma 4 E4B: Effective 4B parameters, targeting edge devices and on-device apps
- Gemma 4 26B A4B: 26B Mixture of Experts (MoE) for high-throughput reasoning
- Gemma 4 31B Dense: Full 31B dense model, bridging server-grade performance with local execution

What sets Gemma 4 apart is not just size but the architecture decisions, multimodal breadth, and agentic capabilities built into every variant.

Gemma 4 Model Architecture: What Powers It

Understanding the architecture helps developers make smarter deployment decisions. Gemma 4 is built on several cutting-edge innovations:

- Alternating Attention Layers: Gemma 4 alternates between local sliding-window attention and global full-context attention. Smaller models use 512-token sliding windows while larger models use 1024 tokens, balancing speed and accuracy.
- Dual RoPE Configuration: Uses standard RoPE for sliding layers and proportional RoPE for global layers, enabling longer, coherent context understanding.
- Per-Layer Embeddings (PLE): A second embedding table feeds a small residual signal into every decoder layer, maximizing parameter efficiency, especially in the E2B and E4B variants.
- Shared KV Cache: The last N layers reuse key-value states from earlier layers, eliminating redundant computations and improving inference speed significantly.
- Variable-Resolution Vision Encoder: Uses learned 2D positions and multidimensional RoPE. Supports five token budget options (70, 140, 280, 560, or 1120 tokens per image) to balance speed, memory, and quality.

These design choices, together with the Mixture of Experts layout, explain why Gemma 4’s 26B MoE model activates only about 4B parameters per token during generation, yet still delivers near-frontier performance.
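To make the alternating attention pattern more concrete, the sketch below builds the two kinds of causal mask in plain Python: a sliding-window (local) mask and a full-context (global) mask. The function names and the layer schedule (one global layer after every three local layers) are illustrative assumptions, not Gemma 4's published layout; only the idea of mixing local and global layers comes from the description above.

```python
def causal_mask(seq_len, window=None):
    """Return a seq_len x seq_len boolean mask.

    mask[q][k] is True when query position q may attend to key position k.
    window=None gives full causal (global) attention; an integer gives
    causal sliding-window (local) attention over the last `window` tokens.
    """
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            visible = k <= q  # causal: never look ahead
            if window is not None:
                visible = visible and (q - k) < window
            row.append(visible)
        mask.append(row)
    return mask


def layer_kinds(num_layers, global_every=4):
    """Hypothetical schedule: every `global_every`-th layer is global."""
    return ["global" if (i + 1) % global_every == 0 else "local"
            for i in range(num_layers)]


local = causal_mask(6, window=3)
full = causal_mask(6)

# The last token sees 3 positions under the local mask and all 6 under the global one.
print(sum(local[5]), sum(full[5]))
print(layer_kinds(8))
```

Local layers keep the per-token attention cost bounded by the window size, while the occasional global layer lets information travel across the whole context.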

Feature #1: True Multimodal Intelligence

One of the most impressive upgrades in Gemma 4 is its native multimodal capability across the entire model family. Every Gemma 4 variant supports:

- Text input and generation: core LLM capability with deep reasoning
- Image understanding: object detection, document/PDF parsing, screen and UI understanding, chart comprehension, OCR (including multilingual), handwriting recognition, and visual pointing
- Video understanding: analyze video content by processing sequences of frames
- Interleaved multimodal input: freely mix text and images in any order within a single prompt

The E2B and E4B models go even further by adding native audio input, supporting speech recognition and real-time audio understanding. This means a small 2B-effective model running on your Android phone can process a voice query, look at an image, and generate an intelligent response entirely offline.

For Java developers, this unlocks possibilities like building Spring Boot microservices that accept document images, invoices, or screenshots and return intelligent structured data without any third-party OCR service.
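As a rough illustration of interleaved multimodal input, the sketch below assembles one user message that mixes text and image parts in order. The "content parts" layout mirrors a convention used by several chat APIs; the exact keys a given Gemma 4 runtime expects are an assumption here, and the file names are hypothetical.

```python
def build_user_message(parts):
    """Turn an ordered list of ("text", str) / ("image", path) pairs into
    a single chat message, preserving the interleaved order."""
    content = []
    for kind, value in parts:
        if kind == "text":
            content.append({"type": "text", "text": value})
        elif kind == "image":
            content.append({"type": "image", "path": value})
        else:
            raise ValueError(f"unsupported part type: {kind}")
    return {"role": "user", "content": content}


message = build_user_message([
    ("text", "Compare the totals on these two invoices."),
    ("image", "invoice_a.png"),  # hypothetical file name
    ("image", "invoice_b.png"),  # hypothetical file name
    ("text", "Return the result as JSON."),
])

print([part["type"] for part in message["content"]])
```

Keeping the parts in a single ordered list is what lets the model see each image in the position where the prompt refers to it.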

Feature #2: Configurable Thinking Mode (Built-in Reasoning)

Gemma 4 introduces a configurable thinking mode, a built-in reasoning layer that allows the model to think step-by-step before generating a final answer. This is similar in concept to chain-of-thought prompting, but it is built into the model itself rather than elicited through prompt wording.

This feature matters for several reasons:

- Complex coding problems benefit from structured reasoning before generating code
- Multi-step math and logic tasks become significantly more accurate
- Agentic workflows require deliberate planning, not reactive responses

Unlike proprietary alternatives, Gemma 4’s thinking mode is configurable, meaning developers can enable or disable it depending on the use case. For latency-sensitive applications, you may want fast responses without deep thinking. For complex document analysis or multi-step agent tasks, you can enable full reasoning.
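One way to apply this trade-off in an application is to decide per request whether reasoning is worth the extra latency. The sketch below is a hypothetical policy: the `enable_thinking` key, the task labels, and the 2000 ms threshold are all assumptions for illustration, and the actual switch depends on the runtime used to serve the model.

```python
# Task labels that usually benefit from step-by-step reasoning (illustrative).
REASONING_TASKS = {"coding", "math", "agent", "document_analysis"}


def settings_for(task, latency_budget_ms):
    """Enable reasoning for multi-step work unless the latency budget is tight."""
    needs_reasoning = task in REASONING_TASKS
    has_time = latency_budget_ms >= 2000  # illustrative threshold
    thinking = needs_reasoning and has_time
    return {
        "enable_thinking": thinking,  # hypothetical flag name
        "max_new_tokens": 4096 if thinking else 512,
    }


print(settings_for("chat", 300))
print(settings_for("math", 10_000))
```

A quick chat reply gets the fast path, while a math task with a generous budget gets full reasoning and a larger output allowance.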

Feature #3: Massive Context Window

Gemma 4 dramatically increases context window capacity compared to previous generations:

- E2B and E4B models: 128K token context window
- 26B MoE and 31B Dense models: 256K token context window

To put this in perspective, a 256K token context window can hold approximately 200,000 words, roughly the equivalent of two full-length novels or an entire Java enterprise codebase. For developers, this means:

- Feed entire Spring Boot project files for architectural analysis
- Process full PDF documents, legal contracts, or technical specifications in one shot
- Maintain extremely long multi-turn conversations without losing earlier context
- Analyze complete codebases for security audits or refactoring recommendations

Legal document analysis, financial report processing, and long technical documentation review are all practical use cases that become effortless with a 256K context window.
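The word-count estimate above can be checked with simple arithmetic, and the same heuristics give a quick pre-flight test for whether a set of documents is likely to fit. This is a rough sketch: it assumes "128K" and "256K" mean 128,000 and 256,000 tokens, about 0.75 English words per token, and about 4 characters per token. Real counts depend on the tokenizer and the language of the text.

```python
CONTEXT_TOKENS = {
    "E2B": 128_000,
    "E4B": 128_000,
    "26B MoE": 256_000,
    "31B Dense": 256_000,
}
WORDS_PER_TOKEN = 0.75  # rough heuristic for English prose
CHARS_PER_TOKEN = 4     # rough heuristic


def approx_words(tokens):
    """Estimate how many English words a token budget can hold."""
    return int(tokens * WORDS_PER_TOKEN)


def fits_in_context(texts, model, reserve_for_output=8_000):
    """Return (fits, estimated_tokens) for a list of input strings."""
    estimated = sum(len(t) for t in texts) // CHARS_PER_TOKEN
    fits = estimated + reserve_for_output <= CONTEXT_TOKENS[model]
    return fits, estimated


print(approx_words(256_000))  # 192000, i.e. roughly 200,000 words
print(fits_in_context(["x" * 600_000], "E2B"))
print(fits_in_context(["x" * 600_000], "31B Dense"))
```

Reserving part of the window for the model's output avoids filling the entire context with input and leaving no room for the answer.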

Feature #4: Enhanced Coding and Agentic Capabilities

Gemma 4 achieves notable improvements in coding benchmarks and introduces native function-calling support, two capabilities that together power highly capable autonomous agents.

Coding Capabilities

- Code generation, completion, and correction
- High-quality offline code generation, turning any workstation into a local AI code assistant
- Works without internet, meaning your proprietary code never leaves your system

Function Calling and Agentic Workflows

Native function calling allows Gemma 4 to interact with external tools and APIs in a structured, reliable way. A real example from the model card shows Gemma 4 E2B reasoning through a multi-step task: identifying a city from an image, then autonomously calling a get_weather(city) tool, all without custom fine-tuning.
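The application side of that flow can be sketched as a tool registry plus a dispatcher. In the sketch below, `get_weather` is a stub, and the JSON shape of the tool call (`name` plus `arguments`) is an assumption for illustration; the format Gemma 4 actually emits is defined by its chat template and the serving runtime.

```python
import json


def get_weather(city):
    """Stand-in tool; a real implementation would query a weather service."""
    return {"city": city, "forecast": "unknown (stub)"}


# Registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}

# JSON-Schema-style description that would be shown to the model.
TOOL_SCHEMAS = [{
    "name": "get_weather",
    "description": "Look up the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}]


def dispatch(tool_call_json):
    """Validate and execute one tool call emitted by the model."""
    call = json.loads(tool_call_json)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"model requested unknown tool: {name}")
    return TOOLS[name](**args)


# Hypothetical model output after it has identified the city from an image.
model_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
print(dispatch(model_output))
```

Rejecting unknown tool names before executing anything keeps the model limited to the functions the application has explicitly registered.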

For enterprise developers, this means you can build AI agents that:

- Query your database via custom tool definitions
- Trigger REST API calls based on user intent
- Process structured and unstructured data within a single pipeline
- Automate customer support or document workflows

The 31B model currently ranks #3 on the Arena AI text leaderboard among all open models globally, and the 26B MoE model holds #6, outcompeting models 20 times its size.

Feature #5: Native System Prompt Support

For the first time in the Gemma family, Gemma 4 introduces native support for the system role. This might sound minor, but it is a significant quality-of-life improvement for production deployments.

System prompt support enables:

- More structured conversations: define the model’s persona, constraints, and behavior upfront
- Controllable outputs: enforce tone, format (JSON, Markdown, plain text), or response length
- Multi-agent architectures: assign different roles to different model instances within the same pipeline
- Better instruction following: the model understands and respects system-level directives natively

Previously, developers had to work around Gemma’s lack of native system prompt support with creative formatting hacks. With Gemma 4, this is now a first-class feature, making it far more production-ready for chatbot and agent use cases.
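With a native system role, a multi-agent setup can be as simple as pairing the same user input with different system prompts. The sketch below shows that idea with plain message lists; the agent names and prompt texts are hypothetical, and the role/content layout is the common chat-message convention rather than anything specific to Gemma 4.

```python
# Hypothetical system prompts, one per agent role.
AGENT_PROMPTS = {
    "reviewer": "You are a strict code reviewer. Reply in Markdown bullet points.",
    "summarizer": "You summarize text. Reply only with JSON: {\"summary\": string}.",
}


def messages_for(agent, user_input):
    """Build a chat message list with the agent's system prompt first."""
    if agent not in AGENT_PROMPTS:
        raise KeyError(f"unknown agent: {agent}")
    return [
        {"role": "system", "content": AGENT_PROMPTS[agent]},
        {"role": "user", "content": user_input},
    ]


snippet = "public int add(int a, int b) { return a - b; }"
for agent in AGENT_PROMPTS:
    msgs = messages_for(agent, snippet)
    print(agent, [m["role"] for m in msgs])
```

Because persona and output format live in the system message, the user message stays identical across agents and can be passed through unchanged.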

Feature #6: On-Device and Edge-First Design

Gemma 4’s small variants, E2B and E4B, are purpose-built for edge deployment on smartphones, IoT devices, Raspberry Pi, and browsers. The “E” in E2B and E4B stands for “effective” parameters, meaning the models pack maximum intelligence into a minimal hardware footprint.

At 4-bit quantization, Gemma 4 E2B needs roughly 3.2 GB of memory, so it can run on a smartphone with 4GB RAM, while Gemma 4 E4B fits comfortably on laptops with 8GB VRAM.
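A rough way to reason about quantized footprints is parameters times bits per weight, divided by eight to get bytes. The sketch below applies that to the two larger models. It covers weights only and ignores the KV cache, activations, and runtime overhead, so real usage will be higher. The effective-parameter models are left out because their stored parameter count differs from the "effective" figure.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Memory for the weights alone, in decimal gigabytes."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


for name, params in [("26B A4B", 26), ("31B Dense", 31)]:
    four_bit = weight_memory_gb(params, 4)
    sixteen_bit = weight_memory_gb(params, 16)
    print(f"{name}: {four_bit:.1f} GB at 4-bit, {sixteen_bit:.1f} GB at 16-bit")
```

Note that a Mixture of Experts model still has to keep all of its experts in memory, so the 26B model's footprint follows its total parameter count, not the 4B that are active per token.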

Key edge deployment advantages include:

- Full offline operation: no internet dependency, no API costs
- Data privacy by design: your data never leaves the device
- Low latency: no round-trip to a remote server
- AICore integration: access Gemma 4 on Android through the new AICore Developer Preview

Feature #7: Multilingual Support (140+ Languages)

Gemma 4 is built for a truly global audience, with native support for over 140 languages. This is not an afterthought: the multilingual capability is deeply integrated into the model’s training and works seamlessly across all modalities.

Practical applications include:

- Building multilingual customer support chatbots without language-specific fine-tuning
- Real-time translation for mobile apps operating fully offline
- Global content localization for EdTech and publishing platforms
- Multilingual OCR for processing documents in diverse scripts and languages

For content creators targeting international audiences or developers building products for global markets, this feature eliminates the need for separate localization pipelines.

Gemma 4 vs Previous Generations: What Changed?

Here is a quick look at how Gemma 4 compares to Gemma 3 in key dimensions:

Gemma 4 is not just an incremental improvement. It is a generational leap in open-model capability.

Real-World Use Cases for Developers

Given Gemma 4’s feature set, here are the most compelling real-world applications:

- Local AI code assistant: Run Gemma 4 31B locally to generate, review, and debug Java or Spring Boot code without sending proprietary code to cloud APIs
- On-device smart assistant: Use E2B/E4B to build offline voice and vision-powered assistants for Android apps
- Intelligent document processing: Parse invoices, PDFs, and contracts using Gemma 4’s vision + long context capabilities
- Enterprise AI agents: Use native function calling to build multi-step automation agents that interact with internal APIs
- Multilingual customer support: Deploy Gemma 4 with 140+ language support for global support automation
- EdTech personalization: Build adaptive learning tools that reason through student queries step-by-step using thinking mode
- Fraud detection and financial analysis: Run locally in regulated environments for on-premise, privacy-compliant AI analysis

How to Get Started with Gemma 4

Getting hands-on with Gemma 4 is straightforward. Here are the main options:

Option 1: Try in the Browser (No Installation)

Visit Google AI Studio or Hugging Face Spaces. Several hosted demos of Gemma 4 are available to test immediately without any setup.

Option 2: Download via Kaggle or Hugging Face

Gemma 4 model weights are available on both Kaggle and Hugging Face. Download your preferred size and quantization level and integrate with your existing Python or Java-based AI pipeline.

Option 3: Run Locally with Ollama

Install Ollama on your machine (Mac, Windows, or Linux) and run:

ollama run gemma4:e4b

This pulls the model and runs it locally with a single command. No API key, no internet needed once downloaded.
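Once the model is available in Ollama, an application can reach it through Ollama's local HTTP API instead of the interactive prompt. The sketch below uses only the Python standard library and assumes Ollama is listening on its default port (11434) and that the `gemma4:e4b` tag from the command above has been pulled. The helper names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint


def build_chat_request(prompt, model="gemma4:e4b"):
    """Build (but do not send) a non-streaming chat request for Ollama."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON response instead of a stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="gemma4:e4b"):
    """Send the prompt to the local Ollama server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, model)) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["message"]["content"]


# Example (requires a running Ollama server with the model pulled):
# print(ask("Explain Java records in two sentences."))
```

Separating request construction from sending makes the payload easy to inspect or unit-test without a running server.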

Option 4: On Android via AICore Developer Preview

Access Gemma 4 on Android through the new AICore Developer Preview for building on-device AI apps.

Option 5: Fine-Tune with Unsloth

For custom fine-tuning on your own dataset, Unsloth provides optimized support for all Gemma 4 variants.

FAQs

Q1. What is Gemma 4 and who developed it?

Gemma 4 is an open-weight generative AI model family developed by Google DeepMind, released on April 2, 2026. It is Google’s most capable open model to date, supporting text, image, audio, and video understanding across four model sizes.

Q2. Is Gemma 4 free to use?

Yes, Gemma 4 is completely free. The model weights are openly available on Hugging Face and Kaggle, and you can run them locally without any API costs or subscription fees.

Q3. What are the available Gemma 4 model sizes?

Gemma 4 comes in four variants: E2B (Effective 2B), E4B (Effective 4B), 26B MoE (Mixture of Experts), and 31B Dense. Each is optimized for different deployment environments, from smartphones to high-end servers.

Q4. Can Gemma 4 run on a laptop or smartphone?

Yes. The E2B model requires only ~3.2 GB RAM at 4-bit quantization, making it suitable for smartphones. The E4B model runs comfortably on laptops with 8 GB VRAM. Both models support fully offline operation.

Q5. What is the context window size in Gemma 4?

The E2B and E4B models support a 128K token context window, while the larger 26B MoE and 31B Dense models support up to 256K tokens, equivalent to approximately 200,000 words.

Q6. Does Gemma 4 support multimodal inputs like images and audio?

Yes. All Gemma 4 models natively support image and video understanding. Additionally, the E2B and E4B edge models support native audio input, enabling voice-driven applications entirely on-device.

Q7. What is the “thinking mode” in Gemma 4?

Thinking mode is a built-in configurable reasoning layer that allows Gemma 4 to reason step-by-step before producing a final response. It can be enabled for complex tasks like multi-step coding or math, and disabled for latency-sensitive applications.

Q8. How does Gemma 4 compare to other open-source models like Llama 4?

Gemma 4’s 31B Dense model currently ranks #3 on the Arena AI text leaderboard among all open models globally, while the 26B MoE holds #6, outperforming many models significantly larger in parameter count.

Q9. Can Gemma 4 be used for building AI agents?

Absolutely. Gemma 4 includes native function calling and system prompt support, making it well-suited for building autonomous multi-step agents that interact with external tools, APIs, and databases without custom fine-tuning.

Q10. How do I get started with Gemma 4?

You can try Gemma 4 instantly via Google AI Studio or Hugging Face Spaces in your browser. For local deployment, use Ollama with the command ollama run gemma4:e4b. Weights are also available on Kaggle and Hugging Face for custom integration and fine-tuning.

Conclusion

Gemma 4 marks a turning point for open-source AI. With its configurable thinking mode, native multimodal intelligence, context windows of up to 256K tokens, native function calling, and support for 140+ languages, all in a family of models whose smallest variants can run on a smartphone, it delivers frontier-level capabilities to any developer, anywhere.

For developers and technical content creators, Gemma 4 is particularly compelling: it runs entirely offline, keeps your code private, integrates natively with agentic workflows, and is completely free to use. Whether you are building a custom AI tool, a custom GPT for any purpose, or an intelligent document processor for your audience, Gemma 4 gives you the building blocks without the cloud bill.

The open-source AI era is no longer about catching up to proprietary models. With Gemma 4, it has officially arrived.

Sources:

https://ai.google.dev/gemma/docs/core

https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/

https://ai.google.dev/gemma/docs/core/model_card_4

https://developers.googleblog.com/bring-state-of-the-art-agentic-skills-to-the-edge-with-gemma-4/
