Mastering AI Workflows: A Comprehensive Guide to Prompt Chaining

Even the most advanced large language models (LLMs) can struggle with highly complicated or multi-faceted tasks when given a single, monolithic prompt. This is where Prompt Chaining emerges as a powerful and elegant solution.

Prompt Chaining is a refined technique that involves breaking down a large, complex task into a series of smaller, more manageable subtasks. Each subtask is then handled by a dedicated prompt, and the output from one prompt serves as the input for the next in a sequential flow. This method significantly enhances the reliability, accuracy, and controllability of LLM applications, allowing them to deal with problems that would otherwise be beyond their capabilities.

A similarly named technique in prompt engineering, ‘Chain of Thought Prompting’, is a different concept. Please refer to the separate article on ‘Chain of Thought Prompting’ for details.

This guide explains Prompt Chaining: its core concepts, how it works, its benefits, and its potential limitations. We will explore real-world examples across different domains, complemented by diagrams and flowcharts. By the end of this article, you will have a solid understanding of Prompt Chaining and how to use it to get more out of LLMs.

What is Prompt Chaining?

Prompt Chaining is a strategic approach to interacting with LLMs. Instead of trying to accomplish everything with one elaborate prompt, you design a sequence of prompts, each focusing on a specific part of the overall task. Think of it like a manufacturing assembly line, where each station performs a particular step, and the partially finished product moves to the next station for further processing until the final product is complete.

“Prompt chaining is a natural language processing (NLP) technique, which leverages large language models (LLMs) that involves generating a desired output by following a series of prompts. In this process, a sequence of prompts is provided to an NLP model, guiding it to produce the desired response. The model learns to understand the context and relationships between the prompts, enabling it to generate meaningful, consistent, and contextually rich text.”

This technique is an advanced implementation of prompt engineering, allowing for more refined control over the LLM’s output. By breaking down the problem, you guide the model through a logical progression, ensuring that each step is executed accurately before moving to the next.

Think of it like this:

Instead of asking “Write a marketing plan for my startup,”

you guide the AI step-by-step:

- “Analyze the startup’s target audience.”

- “Suggest marketing goals based on that audience.”

- “Propose strategies for each goal.”

- “Create a complete marketing plan combining the above.”

Each step refines the AI’s focus, leading to clearer, more accurate, and contextually rich results.

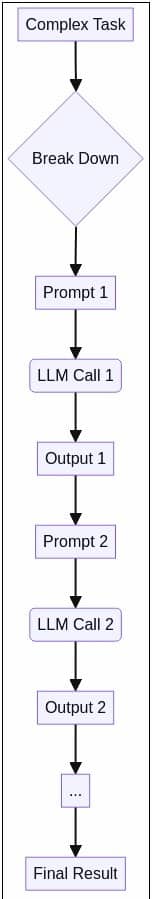

How Does Prompt Chaining Work?

Here’s a step-by-step breakdown of how Prompt Chaining typically works:

1. Task Decomposition: The initial, complex task is carefully analyzed and broken down into a series of logical, sequential subtasks. This requires a clear understanding of the overall objective and the intermediate steps needed to reach it.

2. First Prompt Execution: The first subtask is formulated into a clear and concise prompt. This prompt is sent to the LLM, which processes it and generates an initial output.

3. Output as Input: The output from the first LLM call is then captured and, if necessary, pre-processed or formatted to be suitable as input for the next prompt.

4. Subsequent Prompt Execution: The modified output from the previous step is combined with the next subtask’s instructions to form a new prompt. This new prompt is then sent to the LLM.

5. Iteration: Steps 3 and 4 are repeated for each subsequent subtask in the chain. Each LLM call builds upon the results of the previous one, progressively moving closer to the final solution.

6. Final Output Generation: Once all subtasks have been processed through their respective prompts, the final output of the last prompt in the chain represents the complete solution to the initial complex task.

This methodical approach ensures that the LLM focuses on one specific objective at a time, reducing the cognitive load and minimizing the chances of errors or hallucinations that might occur with a single, overly complex prompt.
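The six steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `fake_llm` is a hypothetical stand-in for whatever model client you use, and `run_chain` and the `{input}` placeholder are names chosen here for the example.

```python
from typing import Callable, List


# Hypothetical stand-in for a real model call; swap in your provider's client.
def fake_llm(prompt: str) -> str:
    return f"[model output for: {prompt[:50]}...]"


def run_chain(
    templates: List[str],
    initial_input: str,
    llm: Callable[[str], str],
) -> List[str]:
    """Run prompts in order; each template's {input} slot gets the previous output."""
    outputs = []
    current = initial_input
    for template in templates:
        prompt = template.format(input=current)  # steps 3-4: output becomes input
        current = llm(prompt).strip()            # light post-processing
        outputs.append(current)                  # keep every step for debugging
    return outputs


# Step 1 (task decomposition) is done by hand: one template per subtask.
steps = [
    "Analyze the target audience for this startup: {input}",
    "Suggest marketing goals based on this audience analysis: {input}",
    "Propose strategies for each of these goals: {input}",
]

results = run_chain(steps, "a meal-kit delivery startup", fake_llm)
final_output = results[-1]  # step 6: the last output is the final result
```

Returning every intermediate output, rather than only the last one, makes it easier to see which step went wrong when the final result is poor.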

Example Analogy

Imagine a chef following a recipe:

- Gather ingredients.

- Chop vegetables.

- Cook and plate the dish.

Instead of doing everything mentally at once, the chef follows a clear chain of steps. Prompt Chaining works the same way for AI.

Why Use Prompt Chaining? The Benefits

Prompt Chaining offers a multitude of advantages that make it a preferred technique for advanced LLM applications:

1. Enhanced Accuracy and Reliability

By breaking down complex tasks into smaller, more manageable steps, Prompt Chaining lets the LLM handle each subtask with a focused prompt. This reduces the likelihood of the model making errors or misinterpreting instructions, leading to more accurate and reliable outputs for the overall task. Each step’s prompt can be tuned and its output validated independently, ensuring higher quality results.

2. Improved Transparency and Debuggability

One of the significant challenges with LLMs is understanding how they arrive at their conclusions. Prompt Chaining provides a clear, step-by-step workflow, making the entire process more transparent. If an error occurs, you can easily identify which specific prompt in the chain is causing the issue, allowing for targeted debugging and refinement. This modularity makes it easier to analyze and improve performance at different stages.

3. Greater Control and Flexibility

Prompt Chaining gives developers and users granular control over the LLM’s behavior at each stage of a complex task. You can tailor individual prompts to specific requirements, guiding the model to produce precise outputs. This flexibility is invaluable for adapting LLM applications to diverse workflows and ensuring the generated content aligns perfectly with user expectations. For instance, in a text summarization system, you can control the level of detail and specificity at each stage [2].

4. Scalability and Modularity

Complex tasks often involve multiple operations or transformations. Prompt Chaining allows you to build scalable solutions by creating modular components (individual prompts) that can be reused or modified independently. This modularity simplifies maintenance and allows for easier integration of new functionalities or adjustments to existing workflows without disrupting the entire system.

5. Reduced Hallucinations

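LLMs are sometimes prone to hallucinations, producing plausible-sounding but incorrect content, and the risk grows when a single prompt asks for too much at once. In a chain, each prompt has a narrow objective and its output can be validated before the next step runs, so errors are easier to catch early.

This differs from Chain-of-Thought (CoT) prompting, which asks the model to “think out loud” by generating a series of intermediate reasoning steps. This internal monologue helps the model to process the problem more thoroughly and arrive at a more accurate final answer. CoT is primarily about improving the reasoning capabilities within a single, extended interaction with the LLM.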

Limitations of Prompt Chaining

Despite its significant advantages, Prompt Chaining is not without its challenges and limitations:

1. Increased Complexity in Design and Management

Designing an effective prompt chain requires careful planning and a deep understanding of the overall task and its subcomponents. Each prompt needs to be precisely crafted to achieve its specific subtask and to ensure its output is compatible with the input requirements of the next prompt. Managing multiple prompts and their interdependencies can become complex, especially for very long or branching chains.

2. Higher Latency and Computational Cost

Since Prompt Chaining involves multiple sequential calls to the LLM, the total execution time and computational resources required will be higher than a single LLM call. Each interaction with the LLM incurs latency and cost, which can accumulate rapidly in extensive chains. This makes it less suitable for applications requiring extremely low latency or operating under strict budget constraints.
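To make the accumulation concrete, here is a rough estimator. It assumes per-token pricing with separate input and output rates, which is common but not universal. The `StepEstimate` class and all numbers below are made up for illustration; substitute your own measurements and your provider's actual rates.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class StepEstimate:
    latency_s: float      # expected wall-clock time for this call
    input_tokens: int     # prompt size, including any previous output passed in
    output_tokens: int    # expected response size


def chain_totals(
    steps: List[StepEstimate],
    price_in_per_1k: float,
    price_out_per_1k: float,
) -> Tuple[float, float]:
    """Sequential calls add up: return (total latency, total cost)."""
    total_latency = sum(s.latency_s for s in steps)
    total_cost = sum(
        s.input_tokens / 1000 * price_in_per_1k
        + s.output_tokens / 1000 * price_out_per_1k
        for s in steps
    )
    return total_latency, total_cost


# Illustrative figures only, not real prices or timings.
three_step_chain = [
    StepEstimate(latency_s=2.0, input_tokens=1500, output_tokens=300),
    StepEstimate(latency_s=1.5, input_tokens=600, output_tokens=400),
    StepEstimate(latency_s=3.0, input_tokens=800, output_tokens=900),
]
latency, cost = chain_totals(three_step_chain, price_in_per_1k=0.5, price_out_per_1k=1.5)
```

Note that each step's input tokens include whatever earlier output is passed forward, so long intermediate outputs are paid for twice: once when generated and again when read by the next step.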

3. Error Propagation

An error or suboptimal output from an early stage in the prompt chain can propagate through subsequent stages, potentially leading to a completely incorrect final result. Debugging such issues can be challenging, as the error might originate several steps back in the chain. Robust error handling and validation mechanisms are crucial to mitigate this risk.

4. Context Window Limitations

While Prompt Chaining helps manage complexity, each prompt still operates within the LLM’s context window. If the cumulative input (including previous outputs in the chain) becomes too large, it can exceed the model’s context limit, leading to truncation or loss of information. Careful management of information flow and summarization at intermediate steps might be necessary.
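One way to manage this is to condense an intermediate output before passing it on. The sketch below uses a character count as a crude proxy for tokens, which is an assumption made for simplicity; a real system would count tokens with the model's own tokenizer. `fit_to_budget` and `fake_llm` are hypothetical names.

```python
from typing import Callable


def fit_to_budget(text: str, llm: Callable[[str], str], max_chars: int = 4000) -> str:
    """Return text unchanged if it fits; otherwise ask the model to condense it."""
    if len(text) <= max_chars:
        return text
    summary = llm(
        "Summarize the following text, keeping only the facts later steps need:\n\n"
        + text
    )
    # Hard cap as a last resort in case the summary is still too long.
    return summary[:max_chars]


# Hypothetical stand-in model that always returns a short summary.
def fake_llm(prompt: str) -> str:
    return "Key points: A, B, C."


long_output = "detail " * 2000  # 14,000 characters, over the budget
next_input = fit_to_budget(long_output, fake_llm, max_chars=4000)
```

The summarization call is itself an extra step in the chain, so it adds latency and cost; it is worth applying only where outputs are actually at risk of overflowing.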

5. Over-specification Risk

There’s a fine line between providing enough guidance and over-specifying the task. If prompts are too rigid or prescriptive, they might stifle the LLM’s creativity or ability to handle unexpected variations. Finding the right balance between control and flexibility is key.

Real-World Examples and Use Cases

Prompt Chaining is incredibly versatile and finds applications across numerous industries and tasks. Here are some real-world examples demonstrating its power:

1. Document Question Answering (Document QA)

This is a classic example where Prompt Chaining shines. Instead of asking an LLM to directly answer a question about a very long document (which might exceed its context window or lead to inaccuracies), you can chain prompts to achieve a more reliable outcome.

- Prompt 1 (Information Extraction): “Extract all sentences or paragraphs from the following document that are relevant to the question: [Question]. Document: [Document Content]. Output the relevant text in bullet points.”

- Prompt 2 (Summarization/Answering): “Using the extracted information: [Output from Prompt 1] and the original question: [Question], provide a concise and accurate answer. Ensure the answer is friendly and helpful.”

This approach ensures that the LLM first focuses on identifying pertinent information and then on synthesizing it into a coherent answer, significantly improving accuracy for long documents.
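The two prompts above translate into code roughly as follows. The templates reuse the wording from the example; `answer_from_document` and `fake_llm` are hypothetical names, and the stand-in model exists only so the sketch runs without a network call.

```python
from typing import Callable

EXTRACT_TEMPLATE = (
    "Extract all sentences or paragraphs from the following document that are "
    "relevant to the question: {question}. Document: {document}. "
    "Output the relevant text in bullet points."
)

ANSWER_TEMPLATE = (
    "Using the extracted information: {extracted} and the original question: "
    "{question}, provide a concise and accurate answer. "
    "Ensure the answer is friendly and helpful."
)


def answer_from_document(question: str, document: str, llm: Callable[[str], str]) -> str:
    # Prompt 1: narrow the document down to the relevant passages.
    extracted = llm(EXTRACT_TEMPLATE.format(question=question, document=document))
    # Prompt 2: answer using only the extracted passages, not the full document.
    return llm(ANSWER_TEMPLATE.format(extracted=extracted, question=question))


# Hypothetical stand-in for a real model call.
def fake_llm(prompt: str) -> str:
    return f"[model output for: {prompt[:40]}...]"


answer = answer_from_document(
    "What is the refund window?",
    "Refunds are accepted within 30 days of purchase. Shipping is free.",
    fake_llm,
)
```

Because the second prompt never sees the full document, its input stays small even when the source text is long.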

2. Content Creation and Marketing

Generating high-quality, structured content often involves multiple steps, making it an ideal candidate for Prompt Chaining.

- Prompt 1 (Outline Generation): “Generate a detailed outline for a blog post about [Topic], including an introduction, 3-4 main sections with sub-points, and a conclusion.”

- Prompt 2 (Section Expansion): “Expand on the first main section of the following outline: [Section 1 from Output of Prompt 1]. Write 300 words, focusing on [Key Aspect].”

- Prompt 3 (Tone Adjustment/Refinement): “Review the following text: [Output from Prompt 2]. Rewrite it to be more engaging and persuasive, targeting a [Target Audience].”

This chain allows for iterative content development, ensuring each part of the content meets specific requirements and quality standards.

3. Data Analysis and Reporting

Prompt Chaining can streamline complex data analysis workflows, especially when dealing with unstructured text data.

- Prompt 1 (Data Extraction): “From the following customer feedback comments, extract all mentions of product features and categorize their sentiment (positive, negative, neutral). Comments: [Customer Feedback Data].”

- Prompt 2 (Summary and Insights): “Based on the extracted features and sentiments: [Output from Prompt 1], summarize the key positive and negative feedback points for each feature. Identify the top 3 most praised and top 3 most criticized features.”

- Prompt 3 (Report Generation): “Generate a brief executive summary and recommendations based on the following insights: [Output from Prompt 2].”

This chain transforms raw feedback into actionable business intelligence.

4. Customer Support Automation

Automated customer support systems can leverage Prompt Chaining to handle inquiries more effectively, providing personalized and accurate responses.

- Prompt 1 (Intent Classification): “Classify the user’s query: [User Query] into one of the following categories: [List of Categories, e.g., ‘Billing’, ‘Technical Support’, ‘Product Information’, ‘Order Status’].”

- Prompt 2 (Information Retrieval/Drafting): “Based on the classified intent: [Output from Prompt 1] and the user’s query: [User Query], retrieve relevant information from our knowledge base and draft a preliminary response. If the intent is ‘Order Status’, ask for the order number.”

- Prompt 3 (Refinement/Personalization): “Refine the drafted response: [Output from Prompt 2] to be more empathetic and address the user by their name: [User Name]. Ensure all necessary information is included.”

This allows for dynamic and context-aware responses, improving customer satisfaction.

5. Code Review and Refactoring

Developers can use Prompt Chaining to automate parts of their code review and refactoring processes.

- Prompt 1 (Code Analysis): “Analyze the following Python code for potential bugs, security vulnerabilities, and adherence to best practices. Code: [Python Code].”

- Prompt 2 (Suggestion Generation): “Based on the analysis: [Output from Prompt 1], suggest specific improvements or fixes for the identified issues. Provide code snippets for each suggestion.”

- Prompt 3 (Documentation Update): “Generate or update the docstrings and comments for the following code, incorporating the suggested changes: [Original Code + Output from Prompt 2].”

This chain can significantly speed up development cycles and improve code quality.

6. Prompt Chaining in Healthcare

For a healthcare chatbot or clinical assistant:

- “List common symptoms of Type 2 diabetes.”

- “Explain how to diagnose Type 2 diabetes.”

- “Recommend lifestyle changes for newly diagnosed patients.”

- “Summarize in patient-friendly language.”

By chaining these, the AI provides structured medical guidance while maintaining clarity for non-expert users.

7. Prompt Chaining for Software Development

As a Java developer, you can use prompt chaining for technical problem-solving.

- “Explain the problem of thread safety in Java.”

- “List strategies to make a class thread-safe.”

- “Write a Java example using synchronized blocks.”

- “Review the code for possible optimizations.”

Each prompt builds on the output of the previous one, so the final code and review are grounded in the explanation and strategies established earlier in the chain.

Types of Prompt Chaining Patterns

1. Linear Chain

Each prompt feeds directly into the next.

Ideal for deterministic multi-step tasks (e.g., extract → analyze → summarize).

2. Branching Chain

Conditional flow based on results.

- If Intent = Refund → Refund Flow

- Else if Intent = Shipping → Shipping Flow

Best for decision trees or rule-based workflows.
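A branching chain can be sketched as a classification prompt followed by a lookup table of flows. Everything here is illustrative: the flow functions, the `FLOWS` table, and the keyword-based `fake_llm` are hypothetical, and a real classifier prompt would need validation of the returned label.

```python
from typing import Callable, Dict

LLM = Callable[[str], str]


def refund_flow(query: str, llm: LLM) -> str:
    return llm(f"Draft a reply explaining the refund process for this request: {query}")


def shipping_flow(query: str, llm: LLM) -> str:
    return llm(f"Draft a reply about shipping status for this request: {query}")


def fallback_flow(query: str, llm: LLM) -> str:
    # Unknown intent: do not guess, hand the query to a person.
    return "Routing to a human agent."


FLOWS: Dict[str, Callable[[str, LLM], str]] = {
    "Refund": refund_flow,
    "Shipping": shipping_flow,
}


def handle_query(query: str, llm: LLM) -> str:
    intent = llm(
        "Classify the user's query into one of: Refund, Shipping. "
        f"Reply with the category name only. Query: {query}"
    ).strip()
    flow = FLOWS.get(intent, fallback_flow)  # the branch point
    return flow(query, llm)


# Hypothetical stand-in model: keyword-based classifier plus canned drafts.
def fake_llm(prompt: str) -> str:
    if prompt.startswith("Classify"):
        return "Refund" if "money back" in prompt else "Shipping"
    return f"[draft reply for: {prompt[:40]}...]"


reply = handle_query("I want my money back for order 123", fake_llm)
```

Using a dictionary instead of an if/else ladder means a new branch is one more entry in `FLOWS`, and any label the model invents falls through to the fallback.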

3. Iterative Chain (Refinement Loop)

Generate → Critique → Improve → Finalize.

Excellent for creative tasks like story writing, coding, and marketing copy.
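The Generate → Critique → Improve → Finalize loop might look like the sketch below. The stop signal (the model replying exactly `APPROVED`) and the `max_rounds` cap are conventions assumed for this example, and the scripted replies stand in for a real model.

```python
from typing import Callable


def refine(task: str, llm: Callable[[str], str], max_rounds: int = 3) -> str:
    """Generate a draft, then alternate critique and improvement until approved."""
    draft = llm(f"Write a first draft for this task: {task}")
    for _ in range(max_rounds):  # cap the loop so it always terminates
        critique = llm(
            "Critique this draft. Reply with exactly APPROVED if no changes "
            f"are needed.\n\nDraft:\n{draft}"
        )
        if critique.strip() == "APPROVED":
            break
        draft = llm(
            "Improve the draft using the critique.\n\n"
            f"Draft:\n{draft}\n\nCritique:\n{critique}"
        )
    return draft


# Hypothetical scripted model: one round of feedback, then approval.
scripted_replies = iter(["draft v1", "Too long; cut the intro.", "draft v2", "APPROVED"])
final_draft = refine("a product tagline", lambda prompt: next(scripted_replies))
```

Without the round limit, a critic that never approves would loop forever and keep incurring cost, so the cap matters as much as the stop signal.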

4. Graph-Based Chains (Advanced)

Multiple paths running in parallel, managed by frameworks like LangGraph or LangChain.
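The idea of parallel paths that later merge can be shown without any framework. The sketch below uses Python's standard-library thread pool, not the LangGraph or LangChain APIs; `run_parallel_branches` and the templates are hypothetical names for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def run_parallel_branches(
    source_text: str,
    branch_templates: Dict[str, str],
    merge_template: str,
    llm: Callable[[str], str],
) -> str:
    """Fan out independent prompts over the same input, then merge the results."""
    names = list(branch_templates)
    prompts = [branch_templates[name].format(input=source_text) for name in names]
    # Branches do not depend on each other, so they can run concurrently.
    with ThreadPoolExecutor(max_workers=max(1, len(prompts))) as pool:
        outputs = list(pool.map(llm, prompts))  # map() preserves prompt order
    combined = "\n".join(f"{name}: {out}" for name, out in zip(names, outputs))
    return llm(merge_template.format(input=combined))


# Hypothetical stand-in for a real model call.
def fake_llm(prompt: str) -> str:
    return f"[model output for: {prompt[:30]}...]"


report = run_parallel_branches(
    "Q3 customer feedback export",
    {
        "praise": "List the most praised features in: {input}",
        "complaints": "List the most criticized features in: {input}",
    },
    "Write an executive summary from these findings:\n{input}",
    fake_llm,
)
```

Running branches concurrently reduces wall-clock latency to roughly that of the slowest branch plus the merge step, though the total number of model calls, and therefore the cost, is unchanged.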

Best Practices for Effective Prompt Chaining

To maximize the benefits of Prompt Chaining and mitigate its limitations, consider these best practices:

1. Clear Task Decomposition: Spend time meticulously breaking down the main task into the smallest, most logical subtasks. Each subtask should have a clear objective.

2. Precise Prompt Engineering for Each Step: Craft each individual prompt with extreme clarity and specificity. Define the expected input, desired output format, and any constraints for that particular step. Use delimiters (e.g., ### or ---) to clearly separate instructions from input data.

3. Intermediate Output Validation: Implement mechanisms to validate the output of each step before passing it to the next. This can involve simple checks (e.g., format validation, keyword presence) or more complex logical checks. This helps prevent error propagation.

4. Error Handling and Fallbacks: Design your chain with robust error handling. What happens if an LLM call fails or returns an unexpected output? Implement fallback mechanisms or human intervention points where necessary.

5. Context Management: Be mindful of the LLM’s context window. If intermediate outputs are very long, consider summarizing them before passing them to the next prompt. Only pass essential information to keep the context concise.

6. Iterative Refinement: Prompt Chaining is an iterative process. Start with a simple chain and gradually add complexity. Test each step thoroughly and refine prompts based on the LLM’s performance.

7. Leverage Tools and Frameworks: Utilize prompt orchestration frameworks like LangChain, LlamaIndex, or custom scripts to manage the flow, state, and execution of your prompt chains. These tools provide abstractions and utilities that simplify development.

8. Consider Human-in-the-Loop: For critical applications, integrate human review or approval at key stages of the chain. This combines the efficiency of AI with human oversight, ensuring quality and safety.
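Practices 3 and 4 (intermediate validation, error handling, and fallbacks) can be combined in a small wrapper around each step. This is a sketch under assumptions: `run_step`, `StepFailed`, and the retry count are names and defaults invented here, and the validator reuses the category list from the customer support example.

```python
from typing import Callable, Optional


class StepFailed(Exception):
    """Raised when a step produces no valid output and has no fallback."""


def run_step(
    prompt: str,
    llm: Callable[[str], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
    fallback: Optional[str] = None,
) -> str:
    """Call the model until is_valid(output) passes or attempts run out."""
    for _ in range(max_attempts):
        try:
            output = llm(prompt)
        except Exception:
            continue  # a failed call counts as a failed attempt
        if is_valid(output):
            return output
    if fallback is not None:
        return fallback  # e.g. a safe default or a hand-off to a human
    raise StepFailed(f"no valid output after {max_attempts} attempts")


ALLOWED = {"Billing", "Technical Support", "Product Information", "Order Status"}


def is_known_category(output: str) -> bool:
    # Simple format check: the model must return exactly one allowed label.
    return output.strip() in ALLOWED


# Hypothetical scripted model: the first reply is invalid, the second is valid.
scripted_replies = iter(["I think it is about billing?", "Billing"])
category = run_step(
    "Classify the user's query: ...",
    lambda prompt: next(scripted_replies),
    is_known_category,
)
```

Stopping the chain with an exception when no fallback exists is deliberate: passing an unvalidated output forward is how a single bad step turns into an incorrect final result.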

When to Use Prompt Chaining?

Use prompt chaining when:

- The task requires multi-step reasoning (e.g., summarization → analysis → generation).

- The output of one step depends on the previous.

- You want modular, reusable prompts for automation or APIs.

Avoid it when:

- The task is simple and direct (e.g., “Translate this text”).

- You need instant single-response answers.

How to Build a Prompt Chain?

Here’s a general structure for building your own chain:

- Define the end goal: What do you want the AI to deliver?

- Break it into sub-tasks: Each sub-task becomes one prompt.

- Sequence logically: Ensure each step’s output supports the next.

- Test and refine: Evaluate responses and tweak prompts.

- Automate: Once stable, automate via API or tool.

Tools That Support Prompt Chaining

Modern AI tools make chaining easy:

- LangChain: A Python framework designed for chaining prompts with memory.

- Microsoft Copilot Studio: Allows task sequencing for business workflows.

- FlowGPT / PromptFlow (Azure): Visualize prompt dependencies.

- OpenAI API with Node.js or Python: You can code prompt chains programmatically.

FAQs on Prompt Chaining

Q1. What is Prompt Chaining in simple terms?

It’s a way to make AI complete complex tasks by breaking them into smaller prompts linked together.

Q2. How is Prompt Chaining different from Chain of Thought Prompting?

Chain-of-Thought happens within a single prompt as internal reasoning; Prompt Chaining happens across multiple prompts as an external workflow.

Q3. Does Prompt Chaining reduce hallucinations?

Yes, because you can validate each step and correct errors early.

Q4. Which AI tools support Prompt Chaining?

LangChain, LangGraph, Amazon Bedrock, the OpenAI API, and Anthropic’s Claude API all support it, either natively or through SDKs.

Q5. What are some good use cases for Prompt Chaining?

Customer support, legal summarization, healthcare intake, SEO content generation, educational tutoring, and data analysis.

Conclusion

Prompt Chaining is a transformative technique that empowers Large Language Models to tackle complex, multi-stage tasks with unprecedented accuracy, control, and transparency. By systematically breaking down problems into a series of interconnected prompts, we can overcome the limitations of single-prompt interactions and unlock new possibilities for AI applications.

From enhancing document question answering and automating content creation to streamlining data analysis and improving customer support, the applications of Prompt Chaining are vast and growing. While it introduces challenges related to design complexity and computational cost, adhering to best practices and leveraging appropriate tools can lead to highly robust and effective AI workflows.

As AI continues to advance, Prompt Chaining will undoubtedly remain a cornerstone of sophisticated prompt engineering, enabling us to build more intelligent, reliable, and versatile AI systems that seamlessly integrate into our daily lives and professional endeavors.

References

[1] https://www.promptingguide.ai/techniques/prompt_chaining

[2] https://www.ibm.com/think/topics/prompt-chaining