Prompt engineering has become a key skill in the age of AI tools such as ChatGPT, Google Gemini, and Claude. Among its many techniques, Chain of Thought (CoT) Prompting and Prompt Chaining are two powerful strategies that help AI models reason better and produce structured, logical answers. Beginners often confuse the two.

At first glance, both may sound similar because they deal with step-by-step thinking. But they are not the same. They solve different types of problems in large language models (LLMs).

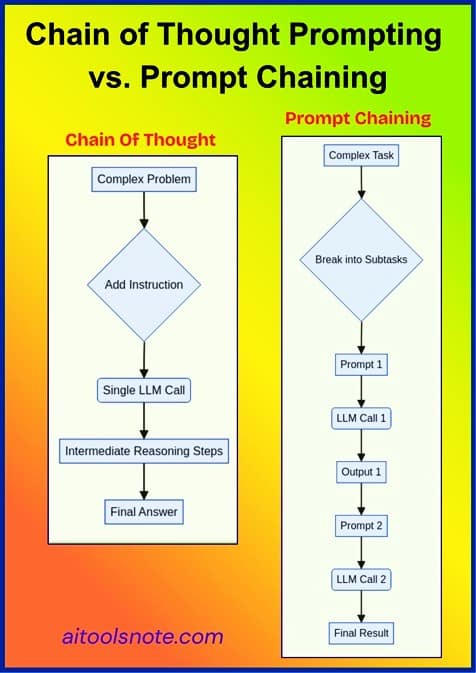

Let’s understand the difference with examples, diagrams, and practical use cases. Even if you are a beginner, by the end of this article, you’ll know exactly when to use which technique.

What to Expect from This Article

In this article, you’ll learn in simple language:

1) What Chain of Thought and Prompt Chaining actually mean

2) Real-world examples of both

3) Key differences (with diagrams)

4) When to use which technique

5) Pros and cons

6) Hybrid strategies (combining both effectively)

By the end, you’ll be able to choose the right technique for your AI workflows or prompt-based applications confidently.

What is Chain of Thought Prompting?

Chain of Thought (CoT) prompting is a technique where the AI is guided to “think step-by-step” before arriving at the final answer. Instead of giving a direct response, the model is encouraged to reason through intermediate steps, just like how a human would solve a math or logic problem.

It’s like telling a student, “Don’t just give me the answer, show your work!”

Instead of asking:

“What is 25 × 4?”

You say:

“Let’s think step by step. First multiply 25 by 2, then by 2 again.”

The model then generates a logical sequence of thoughts before answering.

Visualization

[User Question]

↓

[Model Reasoning Process]

↓

[Step 1 → Step 2 → Step 3]

↓

[Final Answer]

Example 1: Without Chain of Thought

Prompt:

What is 23 × 17?

AI’s Answer:

391.

Simple, but not very transparent. You don’t know how it got there.

Example 2: With Chain of Thought Prompting

Prompt:

Let’s think step by step. What is 23 × 17?

AI’s Answer:

Step 1: 23 × 10 = 230

Step 2: 23 × 7 = 161

Step 3: Add them: 230 + 161 = 391

Final Answer: 391

Here, the model shows its reasoning chain, making the answer interpretable and more reliable.
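The zero-shot pattern in Example 2 can be sketched in Python. This is a minimal illustration, not a fixed API: `build_cot_prompt` and `extract_final_answer` are hypothetical helper names, and the parser assumes the response ends with a `Final Answer:` line as in the sample above, which real models will not always produce.

```python
import re
from typing import Optional

COT_CUE = "Let's think step by step."


def build_cot_prompt(question: str) -> str:
    """Prefix the question with the zero-shot CoT cue (hypothetical helper)."""
    return f"{COT_CUE} {question}"


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after 'Final Answer:', or None if no such line exists."""
    match = re.search(r"^Final Answer:\s*(.+)$", response,
                      flags=re.MULTILINE | re.IGNORECASE)
    return match.group(1).strip() if match else None


# The sample response is copied from Example 2; no model is called here.
sample_response = """Step 1: 23 × 10 = 230
Step 2: 23 × 7 = 161
Step 3: Add them: 230 + 161 = 391
Final Answer: 391"""

prompt = build_cot_prompt("What is 23 × 17?")
answer = extract_final_answer(sample_response)
print(prompt)
print(answer)
```

Keeping the final answer on a predictable line makes it easy to separate the reasoning, which you may want to log, from the value you pass on to other code.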

You may also go through a detailed article on Chain of Thought Prompting with multiple real-time examples.

Why CoT?

Large Language Models (LLMs) like GPT-4 or Gemini are trained to predict the next token based on the context. Adding “Let’s think step by step” nudges the model to write out intermediate steps before committing to an answer, so the final answer is conditioned on that written reasoning.

This improves accuracy, especially for:

- Math word problems

- Logical reasoning

- Coding/debugging

- Multi-step question answering

Input Prompt → Step-by-step reasoning → Intermediate steps → Final Answer

Example

Prompt:

“A store had 23 apples. They used 20 for lunch. Then they bought 6 more. How many apples are left?

Please think step by step.”

Output (CoT reasoning):

- Start with 23 apples.

- Use 20 → 23 − 20 = 3.

- Buy 6 → 3 + 6 = 9.

- Final answer: 9 apples.

Types of Chain of Thought Prompting

| Type | Description | Example |
|---|---|---|
| Zero-shot CoT | You just add “Let’s think step by step.” | “What’s 35% of 80? Let’s think step by step.” |
| Few-shot CoT | You give examples of step-by-step reasoning. | “Example 1: (show reasoning) … Example 2: (show reasoning) … Now your turn.” |
| Self-Consistency CoT | You let the model generate multiple reasoning paths and pick the most common final answer. | Great for mathematical or logical tasks. |
| Auto-CoT | Reasoning demonstrations are generated automatically and then reused as few-shot examples in other prompts. | Used in advanced prompt pipelines. |
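Self-consistency is the easiest of these to show in code, because it boils down to a majority vote over final answers. The sketch below is illustrative only: `sample_fn` stands in for whatever function calls your model with sampling enabled, `make_canned_sampler` is a hypothetical stub, and the five canned answers are made up for the demo rather than real model outputs.

```python
from collections import Counter
from typing import Callable, List


def self_consistent_answer(sample_fn: Callable[[str], str],
                           prompt: str,
                           n_samples: int = 5) -> str:
    """Sample several final answers and return the most frequent one."""
    answers = [sample_fn(prompt) for _ in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]


def make_canned_sampler(canned: List[str]) -> Callable[[str], str]:
    """Stub sampler that replays fixed answers instead of calling a model."""
    remaining = iter(canned)

    def sample(prompt: str) -> str:
        return next(remaining)

    return sample


# Invented answers: three reasoning paths agree, two go wrong.
sampler = make_canned_sampler(["28", "28", "26", "28", "30"])
result = self_consistent_answer(
    sampler, "What's 35% of 80? Let's think step by step.", n_samples=5)
print(result)  # 28
```

In a real setup each sample would be a full reasoning chain, and you would extract the final answer from each chain before voting.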

What is Prompt Chaining?

Prompt Chaining means breaking a complex task into smaller prompts where each prompt’s output becomes the input for the next one. Think of it as a pipeline of prompts, working together like an assembly line.

Real-World Analogy

Imagine you are building a car.

You don’t make the entire car in one go. You:

- Make the engine

- Build the body

- Assemble the parts

Each step depends on the previous — that’s Prompt Chaining.

Example 1: Without Prompt Chaining

Prompt:

Write a blog post about climate change in 500 words, with SEO keywords and a social media caption.

AI’s Answer:

The model tries to do everything at once. The result is sometimes acceptable, but it is often generic or unbalanced.

Example 2: With Prompt Chaining

Step 1 Prompt:

Generate 5 SEO keywords related to climate change.

Output:

[“global warming”, “carbon footprint”, “renewable energy”, “climate crisis”, “sustainability”]

Step 2 Prompt:

Using the keywords above, write a 500-word blog post on climate change.

Step 3 Prompt:

Write a short social media caption summarizing the blog.

Now, each step is simpler and more controlled.

You can tweak or improve each stage independently. This is the essence of Prompt Chaining.
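The three steps above can be wired together in a few lines. This is a sketch under stated assumptions: `call_model` is a placeholder for whatever function sends a prompt to your LLM and returns text, and `make_fake_model` is a hypothetical stand-in so the example runs without any API.

```python
from typing import Callable, Dict, List, Tuple


def run_blog_chain(call_model: Callable[[str], str], topic: str) -> Dict[str, str]:
    """Run the keyword -> post -> caption chain, feeding each output forward."""
    keywords = call_model(f"Generate 5 SEO keywords related to {topic}.")
    post = call_model(
        f"Using these keywords: {keywords}\n"
        f"Write a 500-word blog post on {topic}."
    )
    caption = call_model(
        f"Write a short social media caption summarizing this blog post:\n{post}"
    )
    return {"keywords": keywords, "post": post, "caption": caption}


def make_fake_model() -> Tuple[Callable[[str], str], List[str]]:
    """Build a stub model that records prompts and returns numbered outputs."""
    calls: List[str] = []

    def fake_model(prompt: str) -> str:
        calls.append(prompt)
        return f"[output {len(calls)}]"

    return fake_model, calls


fake_model, calls = make_fake_model()
result = run_blog_chain(fake_model, "climate change")
print(result)
print(calls[1])  # the second prompt contains the first step's output
```

Because every stage is a separate call, you can swap the prompt for one step, or cache its output, without touching the others.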

Real-World Example#1

Goal: Create a news summary and translate it into French.

Prompt 1: “Summarize this article in 5 bullet points.”

Prompt 2: “Convert these bullet points into a 3-sentence summary.”

Prompt 3: “Translate that summary into French.”

Each prompt handles one mini-task → together they form a prompt chain.

Real-World Example#2: Customer Support Bot

Step 1: Identify the user’s intent.

Step 2: Retrieve relevant FAQs.

Step 3: Generate a friendly response.

Each step can be a prompt, chained into an intelligent chatbot workflow.

IBM and Microsoft both use prompt chaining in their enterprise AI orchestration systems (IBM Research, 2024).

Visualization

[Prompt 1: Subtask A]

↓

[Output 1 → Input 2]

↓

[Prompt 2: Subtask B]

↓

[Output 2 → Input 3]

↓

[Final Result]

This modular approach ensures clarity, reusability, and better control.

You may also go through a detailed article on Prompt Chaining with more examples.

Why Prompt Chaining?

- Complex tasks often have multiple sub-steps.

- Makes debugging easier: if one step fails, you fix only that part.

- Keeps each prompt short and focused.

- Enables reusability (you can reuse certain prompts in other chains).

Chain of Thought Prompting vs Prompt Chaining: The Key Differences

| Criteria | Chain of Thought Prompting | Prompt Chaining |
|---|---|---|
| Purpose | Improve reasoning in one prompt | Divide complex workflows into multiple prompts |
| Structure | One prompt + one reasoning process | Multiple prompts linked in sequence |
| Control | Model decides how to reason | You design each step explicitly |
| Transparency | Shows model’s “thoughts” | Shows modular outputs at each stage |
| Complexity | Good for reasoning tasks | Good for workflow tasks |
| Example Use | Solving a logic puzzle | Summarize → Translate → Format pipeline |
| Debugging | You can inspect reasoning chain | You can test each stage independently |
| Performance | Improves reasoning accuracy | Improves workflow reliability |

Example Comparison

| Scenario | Chain of Thought | Prompt Chaining |
|---|---|---|
| Math problem: “If a car travels 60 km/h for 2 hours, how far does it go?” | “Let’s think step by step…” → Calculates directly | Not ideal; task too small |
| Business workflow: “Summarize an article, extract keywords, then write a tweet.” | Possible, but messy in one prompt | Ideal: break into 3 chained prompts |
| Debugging code: “Explain why this code fails.” | Great for reasoning | May need multiple steps like reading logs, fixing, retesting; use chaining |

Combining Both Techniques

In real-world AI systems, both techniques are often used together.

For example, in an AI writing assistant:

- Each stage (outline → draft → SEO → summary) is prompt chaining.

- Within each prompt, you can add Chain of Thought reasoning to improve quality.

Example:

Step 2 Prompt: “Let’s think step by step to expand each bullet point logically.”

Thus, you get the best of both worlds: modular automation + intelligent reasoning.

Real-World Scenarios

Scenario#1. Chain of Thought Example: Math Word Problem

Prompt:

“A train leaves at 3 PM and travels 60 km/hr. Another leaves at 4 PM at 80 km/hr on the same track. When do they meet? Let’s think step by step.”

Reasoning chain:

- The first train has a 1-hour head start → 60 km ahead.

- Relative speed = 80 − 60 = 20 km/hr.

- Time to catch up = 60 / 20 = 3 hours.

- Meeting time = 4 PM + 3 hrs = 7 PM.

This is a classic CoT task.
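When you use CoT for arithmetic, it helps to verify the model's chain independently. The function below reproduces the same four reasoning steps in plain Python. The name `catch_up_hour` is hypothetical, and the code assumes both trains start from the same point, travel in the same direction, and keep a constant speed.

```python
def catch_up_hour(first_departure: float, first_speed: float,
                  second_departure: float, second_speed: float) -> float:
    """Return the 24-hour clock time at which the second train catches the first."""
    if second_speed <= first_speed:
        raise ValueError("the second train must be faster to catch up")
    # Step 1: distance the first train covers before the second one leaves.
    head_start_km = first_speed * (second_departure - first_departure)
    # Step 2: speed at which the gap closes.
    closing_speed = second_speed - first_speed
    # Step 3: hours needed to close the gap.
    hours_to_catch_up = head_start_km / closing_speed
    # Step 4: clock time of the meeting.
    return second_departure + hours_to_catch_up


meeting = catch_up_hour(15, 60, 16, 80)  # 3 PM at 60 km/hr, 4 PM at 80 km/hr
print(meeting)  # 19.0, i.e. 7 PM
```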

Scenario#2. Prompt Chaining Example: Resume Parser

Goal: Build an AI tool that extracts and formats resume details.

Prompt 1: Extract name, skills, and experience.

Prompt 2: Classify skills as “technical” or “soft.”

Prompt 3: Generate a formatted summary paragraph.

Each step can be separately improved and reused in other pipelines.

Scenario#3. Combined Approach: Hybrid Method

You can combine both!

Example: Legal document analysis

Prompt 1 → Extract relevant legal clauses.

Prompt 2 → For each clause, apply CoT to reason about implications.

Prompt 3 → Summarize reasoning into a report.

Here, Prompt 2 uses Chain of Thought inside a Prompt Chain.
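A rough sketch of that hybrid flow is shown below. Everything here is an assumption made for illustration: `call_model` is a placeholder for your LLM call, the prompt wording is invented, and `scripted_model` returns hard-coded text so the example runs offline.

```python
from typing import Callable

COT_CUE = "Let's think step by step."


def analyze_document(call_model: Callable[[str], str], document: str) -> str:
    """Chain three prompts; the middle one applies CoT to each clause."""
    # Prompt 1: extract clauses, expected one per line.
    clauses_text = call_model(
        f"Extract the relevant legal clauses, one per line:\n{document}")
    clauses = [line.strip() for line in clauses_text.splitlines() if line.strip()]
    # Prompt 2: one CoT-style call per clause.
    analyses = [
        call_model(f"{COT_CUE} What are the implications of this clause?\n{clause}")
        for clause in clauses
    ]
    # Prompt 3: summarize all reasoning into a single report.
    joined = "\n\n".join(analyses)
    return call_model(f"Summarize the following reasoning into a report:\n{joined}")


def scripted_model(prompt: str) -> str:
    """Offline stub with canned replies keyed on the prompt's opening words."""
    if prompt.startswith("Extract"):
        return "Clause A\nClause B"
    if prompt.startswith(COT_CUE):
        return f"Reasoning about {prompt.splitlines()[-1]}"
    return "REPORT:\n" + prompt.split("\n", 1)[1]


report = analyze_document(scripted_model, "(contract text)")
print(report)
```

The chain controls the order of work, while the CoT cue only affects how the model reasons inside the second step.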

This hybrid approach is widely used in tools like LangChain, LLM orchestration frameworks, and AI workflow builders.

Visual Summary

When to Use Which?

Use Chain of Thought Prompting when:

- You want the model to explain its reasoning.

- The problem involves multi-step logic.

- You’re asking a single complex question.

Example:

Q: A train leaves at 2 PM and travels 40 km/h. Another train leaves the same station at 3 PM at 60 km/h. When will they meet?

Prompt: “Let’s think step by step.”
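For reference, this is the reasoning a step-by-step response should arrive at, assuming both trains travel in the same direction along the same route at constant speed:

```latex
\begin{aligned}
\text{head start} &= 40\ \text{km/h} \times 1\ \text{h} = 40\ \text{km} \\
\text{closing speed} &= 60\ \text{km/h} - 40\ \text{km/h} = 20\ \text{km/h} \\
\text{time to catch up} &= \frac{40\ \text{km}}{20\ \text{km/h}} = 2\ \text{h} \\
\text{meeting time} &= 3\ \text{PM} + 2\ \text{h} = 5\ \text{PM}
\end{aligned}
```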

Use Prompt Chaining when:

- You want to automate workflows or multi-stage outputs.

- The result depends on multiple sequential tasks.

- You need reusability and modular design.

Example:

Step 1: Generate a blog outline

Step 2: Expand each section

Step 3: Add SEO keywords

Step 4: Generate a title and meta description

This is how content creation pipelines are built using LangChain or Zapier + OpenAI APIs.

| Situation | Best Technique | Why |
|---|---|---|
| Solving math, logic, or reasoning tasks | Chain of Thought | You want the model to “think out loud.” |
| Summarizing, translating, extracting info | Prompt Chaining | Task can be divided into stages. |
| Multi-step reasoning inside a workflow | Hybrid | Combine modular steps with reasoning. |
| When debugging AI responses | Prompt Chaining | Each step can be validated independently. |
| When you want transparency of model thought | Chain of Thought | Model reveals its reasoning. |

Advantages and Limitations

Chain of Thought Prompting

Advantages:

- Improves reasoning performance

- Provides interpretability

- Reduces logical errors

Limitations:

- Reasoning may still be incorrect (“hallucinated”)

- Longer prompts and step-by-step outputs use more tokens and cost more

- Requires large models for best results

Prompt Chaining

Advantages:

- Modular & scalable

- Easier to maintain and debug

- Works well for long workflows

Limitations:

- Propagates early errors down the chain

- More API calls = higher latency

- Requires careful design of step-to-step transitions

| Technique | Advantages | Limitations |
|---|---|---|
| Chain of Thought | Improves reasoning; makes outputs explainable; simple to apply | May produce verbose answers; needs careful prompt phrasing |
| Prompt Chaining | Enables workflow automation; better control; reusable | Needs multiple API calls; can be slower; requires orchestration logic |

Best Practices

For Chain of Thought Prompting

- Use phrases like “Let’s think step by step” or “Show your reasoning.”

- Use few-shot examples with reasoning steps.

- For tricky problems, apply self-consistency (generate multiple chains and pick the most common answer).

- Keep reasoning short to save tokens.

For Prompt Chaining

- Clearly define inputs and outputs for each step.

- Use validation rules between prompts.

- Store intermediate outputs for debugging.

- Reuse generic steps (e.g. summarization, translation) across workflows.
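Two of these practices, validation between prompts and storing intermediate outputs, might look like this in code. Treat it as a sketch: `parse_keywords`, `run_step`, and `trace` are hypothetical names, the step is assumed to return a JSON list, and the lambda stands in for a real model call.

```python
import json
from typing import Any, Callable, Dict, List

trace: List[Dict[str, Any]] = []  # intermediate outputs kept for debugging


def parse_keywords(raw: str, expected: int = 5) -> List[str]:
    """Check that a step returned a JSON list of `expected` non-empty strings."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"step output is not valid JSON: {exc}") from exc
    if not isinstance(data, list) or len(data) != expected:
        raise ValueError(f"expected a JSON list with {expected} items")
    if not all(isinstance(item, str) and item.strip() for item in data):
        raise ValueError("every keyword must be a non-empty string")
    return data


def run_step(name: str, call_model: Callable[[str], str], prompt: str,
             validate: Callable[[str], Any]) -> Any:
    """Call the model, record the raw output, then validate before returning."""
    raw = call_model(prompt)
    trace.append({"step": name, "prompt": prompt, "raw_output": raw})
    return validate(raw)


# Stub model output reusing the keyword list from the earlier example.
stub = lambda prompt: ('["global warming", "carbon footprint", '
                       '"renewable energy", "climate crisis", "sustainability"]')
keywords = run_step("keywords", stub,
                    "Generate 5 SEO keywords as a JSON list.", parse_keywords)
print(keywords)
print(len(trace))
```

The raw output is recorded before validation runs, so a failed step still leaves evidence in `trace` of what the model actually returned.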

Common Mistakes to Avoid

- Overloading Chain of Thought: Too many reasoning steps make it confusing.

- Breaking CoT into separate prompts: The model loses context between prompts, so keep a single chain of reasoning inside one prompt.

- Skipping intermediate checks in Prompt Chaining: Always validate outputs before passing them to the next stage.

Real Tools That Use These Techniques

| Tool / Platform | Technique | Description |
|---|---|---|
| LangChain | Prompt Chaining | Builds complex LLM pipelines and agents |
| OpenAI GPTs / Assistants API | Both | Uses reasoning (CoT) and multi-prompt flows |
| Anthropic Claude | CoT | Excels in reasoning and long context analysis |
| IBM watsonx.ai | Prompt Chaining | Enterprise pipelines for AI workflows |
| Google Gemini | CoT | Great at logical reasoning prompts |

Example Prompt Templates

Chain of Thought Template

“You are a reasoning assistant. Solve the following problem.

Show your reasoning step by step before giving the final answer.”
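The template can be turned into a small prompt builder that also accepts optional worked examples, which gives you few-shot CoT. This is one possible layout, not a standard: `build_prompt` and the `Problem:` label are assumptions, and the worked example reuses the apples problem from earlier in the article.

```python
from typing import Sequence, Tuple

COT_TEMPLATE = (
    "You are a reasoning assistant. Solve the following problem.\n"
    "Show your reasoning step by step before giving the final answer."
)


def build_prompt(problem: str,
                 examples: Sequence[Tuple[str, str]] = ()) -> str:
    """Combine the template, optional worked examples, and the new problem."""
    parts = [COT_TEMPLATE]
    for question, worked_solution in examples:
        parts.append(f"Problem: {question}\n{worked_solution}")
    parts.append(f"Problem: {problem}")
    return "\n\n".join(parts)


apples_example = (
    "A store had 23 apples. They used 20 for lunch. Then they bought 6 more. "
    "How many apples are left?",
    "23 - 20 = 3. Then 3 + 6 = 9. Final answer: 9 apples.",
)

prompt = build_prompt("What is 23 × 17?", examples=[apples_example])
print(prompt)
```

Calling `build_prompt` with no examples gives the zero-shot version of the same template.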

Prompt Chaining Template

Step 1 Prompt: “Extract key entities from the given paragraph.”

Step 2 Prompt: “Summarize these entities into a 100-word report.”

Step 3 Prompt: “Translate the summary into Spanish.”

Key Takeaways

| Insight | Explanation |
|---|---|
| CoT is about internal reasoning | Encourages LLMs to “think” before answering |
| Prompt Chaining is about external structuring | Splits one big problem into smaller tasks |
| They complement each other | You can use both together |
| CoT improves accuracy | Especially in reasoning-heavy domains |
| Chaining improves reliability | Especially in complex workflows |

Conclusion

Both Chain of Thought Prompting and Prompt Chaining are powerful prompt engineering strategies, but they serve different purposes.

- Use Chain of Thought when you want deeper reasoning, logic, and transparency.

- Use Prompt Chaining when building multi-step workflows or automation pipelines.

- Combine both for advanced AI applications such as chatbots, summarizers, or agents.

In short:

CoT = Think smarter.

Prompt Chaining = Work smarter.

Chain of Thought makes AI think better.

Prompt Chaining makes AI work smarter.

Sources and References

Prompt Chaining. https://www.promptingguide.ai/techniques/prompt_chaining

What is Prompt Chaining? https://www.ibm.com/think/topics/prompt-chaining

Chain-of-Thought (CoT) Prompting. https://www.promptingguide.ai/techniques/cot

What is chain of thought (CoT) prompting? https://www.ibm.com/think/topics/chain-of-thoughts
